The past two years have been defined by a relentless pursuit of GPU capacity, fueling massive investments in data centers and ballooning IT budgets. The prevailing narrative justified this expenditure: secure hardware now or risk being left behind in the AI revolution. However, with Gartner estimating AI infrastructure spending to reach $401 billion this year, a stark reality is emerging. Enterprise-wide audits reveal that average GPU utilization is languishing at a mere 5%, indicating a significant inefficiency in deployed resources.

This low utilization is exacerbated by procurement cycles that often make idle GPUs a fixed cost. Many organizations committed to traditional three-to-five-year depreciation schedules for their AI hardware, including the five-year terms common with hyperscale providers. Consequently, infrastructure acquired during the peak of the “GPU scramble” now represents a sunk capital expenditure, regardless of actual usage.

As these assets age, the focus is shifting from initial acquisition to maximizing the economic return from existing deployments. Underutilized GPUs are not merely dormant resources; they are depreciating assets that must now demonstrate tangible value. This necessitates a fundamental shift in strategy: from accumulating capacity to optimizing the performance and cost-efficiency of what has already been implemented.

The GPU Scramble Was a Distraction

For leading enterprises such as Intuit, Mastercard, and Pfizer, the primary bottleneck was rarely the sheer availability of GPUs. Leveraging extensive relationships with cloud giants like AWS, Azure, and GCP, these companies secured significant capacity reservations. However, much of this provisioned power remained underutilized as internal teams grappled with challenges related to data gravity, governance frameworks, and immature architectural designs.

The industry’s emphasis on “scarcity” effectively masked these underlying inefficiencies. While headlines focused on supply chain constraints, the internal reality was a pronounced productivity gap. Organizations were activity-rich but output-poor: they invested heavily in hardware acquisition yet produced little functional AI output, such as useful generated tokens.

At a 5% utilization rate, the financial viability is questionable. For every dollar invested in silicon, 95 cents can be considered a contribution to cloud provider revenue rather than direct operational value. In any other business function, a 95% waste metric would be unacceptable; within AI infrastructure, it was often rationalized as “preparedness.”
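The arithmetic behind that claim can be made explicit. The sketch below is illustrative only: the $2.00 hourly rate is a hypothetical figure chosen for round numbers, and only the 5% utilization comes from the audits cited above.

```python
def cost_per_useful_gpu_hour(hourly_rate: float, utilization: float) -> float:
    """Return what one hour of actual GPU work costs when only a
    fraction of the paid hours do useful work."""
    if not 0.0 < utilization <= 1.0:
        raise ValueError("utilization must be in (0, 1]")
    return hourly_rate / utilization


def idle_spend(total_spend: float, utilization: float) -> float:
    """Return the portion of spend that paid for idle capacity."""
    if not 0.0 <= utilization <= 1.0:
        raise ValueError("utilization must be in [0, 1]")
    return total_spend * (1.0 - utilization)


HOURLY_RATE = 2.00   # hypothetical price per GPU-hour, in dollars
UTILIZATION = 0.05   # the 5% average cited above

if __name__ == "__main__":
    useful = cost_per_useful_gpu_hour(HOURLY_RATE, UTILIZATION)
    print(f"Cost per useful GPU-hour: ${useful:.2f}")
    print(f"Idle spend per dollar:    ${idle_spend(1.00, UTILIZATION):.2f}")
```

Under these assumed inputs, a $2 GPU-hour works out to roughly $40 per hour of useful work, and 95 cents of each dollar pays for idle time.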

Q1 Tracker Signals a Market Pivot

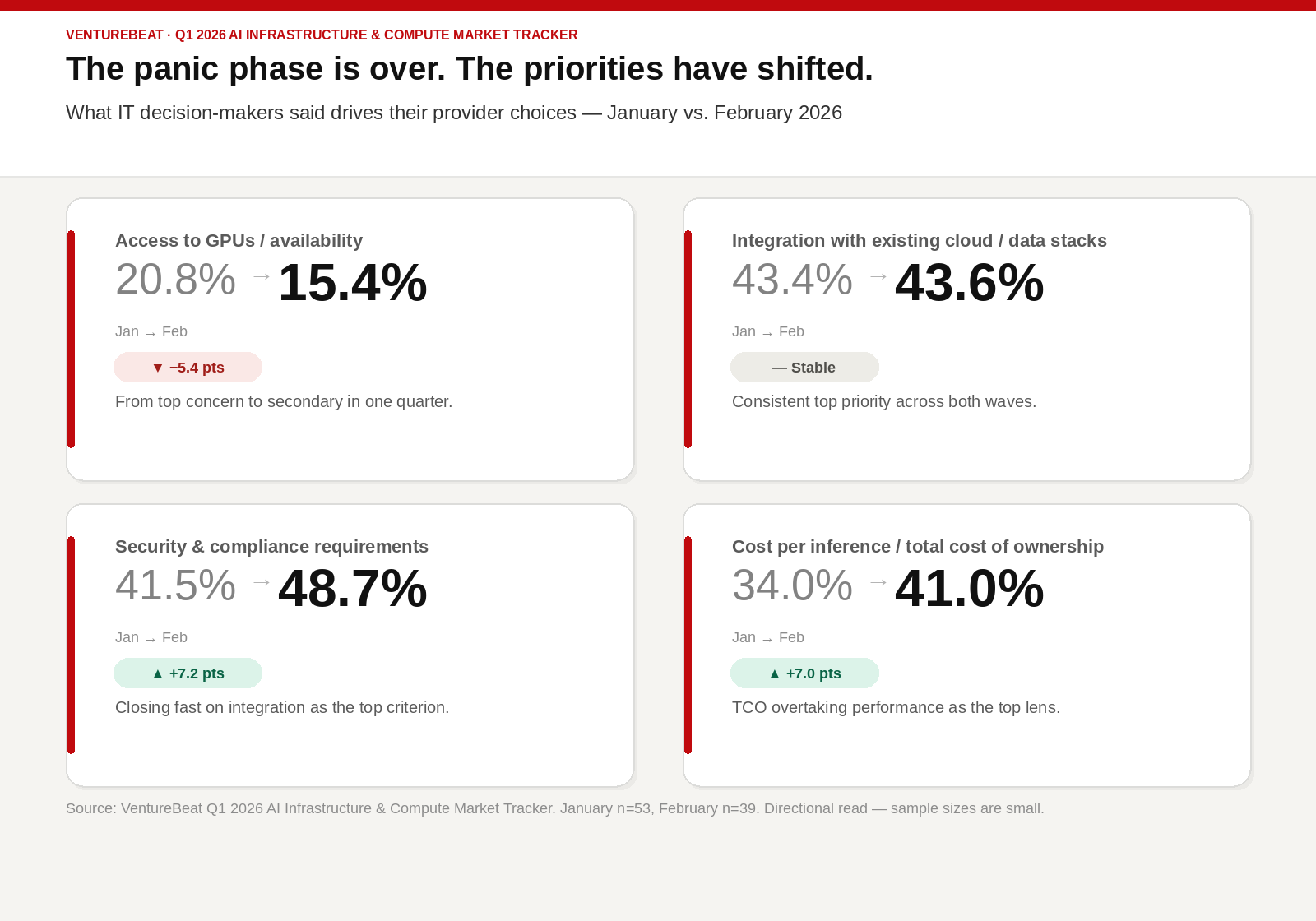

VentureBeat’s Q1 2026 AI Infrastructure & Compute Market Tracker indicates a clear departure from the panic-driven acquisition phase. Although the tracker’s methodology involves a directional survey (53 respondents in January, 39 in February), the consistent pattern across both waves reveals a market undergoing rapid recalibration. When IT decision-makers were asked about their current provider selection criteria, the following shifts were observed:

- Declining Access Concerns: The emphasis on “Access to GPUs/availability” dropped significantly from 20.8% to 15.4% within a single quarter, moving from a primary concern to a secondary one.

- Pragmatic Priorities: “Integration with existing cloud and data stacks” remained the top priority, holding steady at approximately 43%. Simultaneously, security and compliance requirements saw a surge, rising from 41.5% to 48.7%, nearly matching integration needs.

- Total Cost of Ownership (TCO) Mandate: “Cost per inference/TCO” emerged as a dominant procurement factor, jumping from 34% to 41% in one quarter, surpassing performance metrics as the leading consideration.

The era of unchecked capital expenditure for AI infrastructure is drawing to a close. Inference, the process of generating AI outputs, is now the critical cost center that demands rigorous financial scrutiny.

While model training and fine-tuning were often treated as tactical projects, inference represents a strategic business model. For many enterprises, the unit economics of this model are currently unsustainable. Initial pilot phases often benefited from flat-fee licenses and bundled token deals, which inadvertently encouraged architectural inefficiencies. Teams developed complex retrieval pipelines and long-context agents under the assumption that token costs were nominal.

However, as the industry transitions towards usage-based pricing in 2026, these architectures are becoming significant financial liabilities. When metered billing is applied to infrastructure that experiences 95% idle time, the cost per useful token escalates dramatically once a project moves into production, creating an immediate budget crisis.

From Activity to Productivity

The shift highlighted by the Q1 data signifies more than a budget recalibration; it represents a fundamental redefinition of how AI leadership is measured. For the preceding two years, success was defined by the ability to “secure” necessary AI infrastructure. In this new era of efficiency, success is increasingly determined by the capacity to “squeeze” maximum value from that infrastructure.

Consequently, organizations are now prioritizing cost optimization platforms, recognizing that simply acquiring more GPUs is often not the optimal solution. The prevailing question among IT users has evolved from “How do we get more GPUs?” to “How do we stop paying for GPUs we aren’t using?” This marks a critical transition from measuring GPU activity (e.g., number of GPUs powered on) to measuring GPU productivity (e.g., useful tokens generated per dollar spent).
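The difference between the two measures is easy to see side by side. The following is a minimal sketch with made-up fleet numbers; the `FleetSample` fields and both sample fleets are hypothetical and only illustrate how the two ratios diverge.

```python
from dataclasses import dataclass


@dataclass
class FleetSample:
    gpus_total: int
    gpus_powered_on: int
    useful_tokens: int     # tokens delivered to a user or downstream system
    spend_dollars: float   # total spend for the same period


def activity(sample: FleetSample) -> float:
    """Share of GPUs that are powered on (says nothing about output)."""
    return sample.gpus_powered_on / sample.gpus_total


def productivity(sample: FleetSample) -> float:
    """Useful tokens generated per dollar spent."""
    return sample.useful_tokens / sample.spend_dollars


# Two hypothetical fleets: identical activity, very different productivity.
fleet_a = FleetSample(100, 100, useful_tokens=50_000_000, spend_dollars=10_000.0)
fleet_b = FleetSample(100, 100, useful_tokens=1_000_000_000, spend_dollars=10_000.0)

if __name__ == "__main__":
    for name, fleet in (("A", fleet_a), ("B", fleet_b)):
        print(name, f"activity={activity(fleet):.0%}",
              f"tokens/$={productivity(fleet):,.0f}")
```

Both fleets would look identical on an activity dashboard, while the tokens-per-dollar figure separates them by a factor of 20.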

The luxury of underutilization has become a significant liability. The next phase of enterprise AI adoption hinges on making existing silicon investments financially productive. This requires a strategic focus on optimizing inference economics and maximizing the return on investment for deployed AI hardware.

Token Consumer vs. Producer: A Strategic Choice

As enterprises advance from proof-of-concept stages to full production deployment, the focus is intensifying on inference architecture and the economics of token generation, moving beyond mere GPU acquisition. In this evolving economic landscape, every organization faces a crucial decision: will it operate as a token consumer, incurring ongoing costs to model providers, or as a token producer, managing its own infrastructure and controlling unit economics?
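A rough break-even model can frame that choice. This is a simplified sketch, not a pricing tool: it assumes a flat per-million-token API price for the consumer path and a fixed monthly cost plus a marginal per-million-token cost for the producer path. All function names and dollar figures are hypothetical.

```python
from typing import Optional


def consumer_cost(tokens: float, price_per_million: float) -> float:
    """Monthly cost when buying tokens from a model provider."""
    return tokens / 1e6 * price_per_million


def producer_cost(tokens: float, fixed_monthly: float,
                  marginal_per_million: float) -> float:
    """Monthly cost when running one's own inference stack."""
    return fixed_monthly + tokens / 1e6 * marginal_per_million


def break_even_tokens(price_per_million: float, fixed_monthly: float,
                      marginal_per_million: float) -> Optional[float]:
    """Monthly token volume above which producing is cheaper.
    Returns None if producing never becomes cheaper."""
    saving_per_million = price_per_million - marginal_per_million
    if saving_per_million <= 0:
        return None
    return fixed_monthly / saving_per_million * 1e6


if __name__ == "__main__":
    # Hypothetical inputs: $10 per million tokens via API, versus
    # $50,000 per month fixed plus $2 per million tokens self-hosted.
    volume = break_even_tokens(10.0, 50_000.0, 2.0)
    print(f"Break-even volume: {volume:,.0f} tokens per month")
```

Below the break-even volume the consumer path is cheaper; above it, the fixed cost of owning the stack is amortized across enough tokens to win. The model deliberately ignores staffing, power, and utilization effects, which in practice shift the threshold.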

This decision transcends cost considerations, impacting how organizations manage operational complexity. Establishing an inference infrastructure requires addressing challenges such as KV cache persistence, storage architecture optimization, latency guarantees, and power constraints. Furthermore, it involves navigating real-world enterprise limitations, including power availability, data center footprint, and operational complexity, all of which directly influence AI scalability.

At the heart of this challenge lies KV cache management. Storing context data in GPU memory is performant but expensive, limiting concurrency and escalating per-token costs. Offloading the KV cache to high-speed NVMe-based storage can improve reuse and reduce prefill overhead, but introduces trade-offs in latency and system design. As NVMe costs fluctuate and GPU memory remains a scarce resource, organizations must meticulously balance performance with efficiency.
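To see why GPU-resident context limits concurrency, it helps to size the cache. The sketch below uses the standard per-token estimate for a transformer with multi-head or grouped-query attention (one key and one value vector per layer per KV head). The model dimensions and the 40 GiB cache budget are hypothetical and do not describe any specific product.

```python
def kv_bytes_per_token(num_layers: int, num_kv_heads: int,
                       head_dim: int, bytes_per_element: int) -> int:
    """Bytes of KV cache one token occupies: keys plus values,
    for every layer and every KV head."""
    return 2 * num_layers * num_kv_heads * head_dim * bytes_per_element


def max_concurrent_sequences(cache_budget_bytes: int, context_tokens: int,
                             bytes_per_token: int) -> int:
    """How many full-length sequences fit in the cache budget."""
    return cache_budget_bytes // (context_tokens * bytes_per_token)


if __name__ == "__main__":
    # Hypothetical model: 32 layers, 8 KV heads, head dimension 128, FP16.
    per_token = kv_bytes_per_token(32, 8, 128, 2)
    budget = 40 * 2**30          # hypothetical 40 GiB left for the cache
    print(f"KV cache per token: {per_token / 1024:.0f} KiB")
    print("Concurrent 8K-token sequences:",
          max_concurrent_sequences(budget, 8192, per_token))
```

With these assumptions each 8,192-token sequence holds about 1 GiB of cache, so the budget caps concurrency at a few dozen sequences per GPU. Doubling the context window halves that number, which is the pressure that makes offloading attractive.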

For entities aiming to be token producers, mastering these trade-offs across memory, storage, power, and operations is intrinsic to scaling effectively. Conversely, for those where this overhead remains prohibitive, alternative strategies are necessary.

The Specialized Cloud Pivot

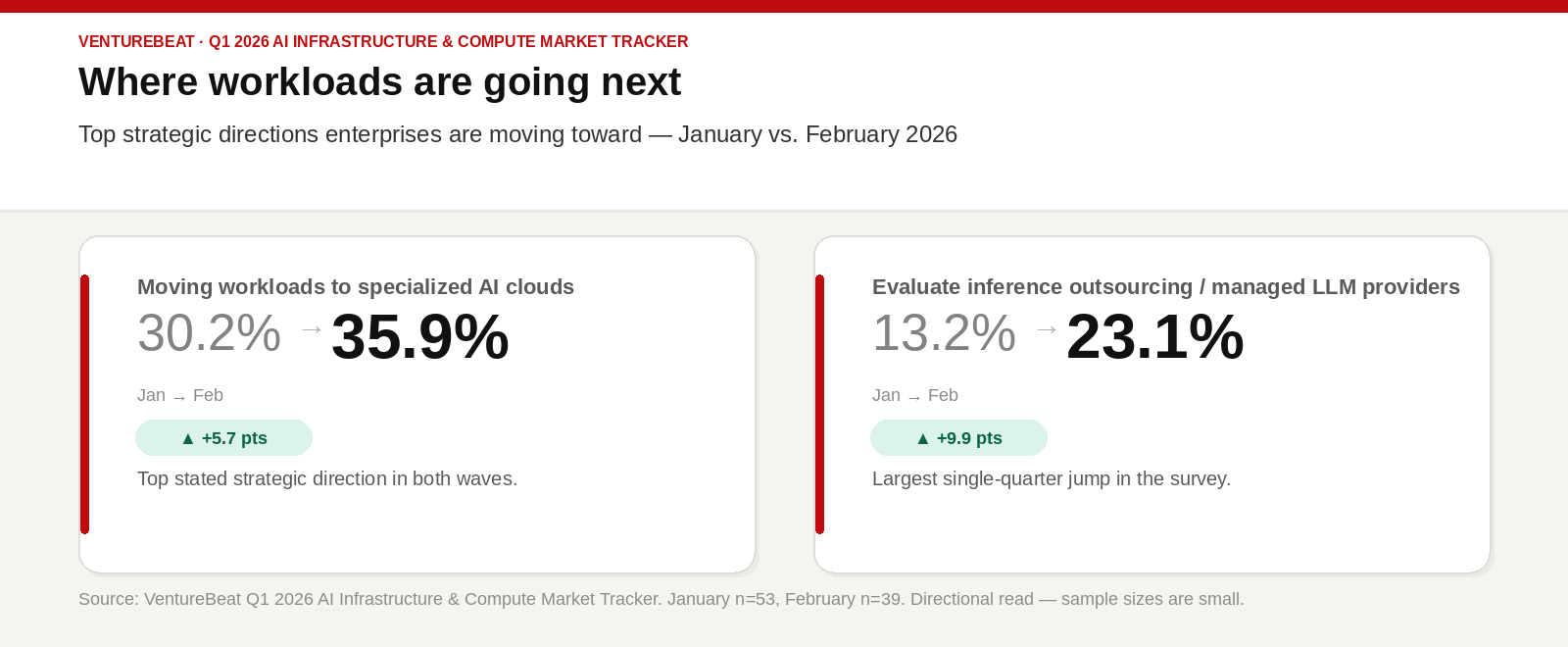

The Q1 tracker data reveals a significant market trend: a growing preference for specialized AI clouds, with enterprises increasingly migrating workloads to these platforms. This category saw its share grow from 30.2% to 35.9% in the latest survey. Providers such as CoreWeave, Lambda, and Crusoe are rapidly evolving their offerings. While initially focused on model builders and training-intensive workloads, their revenue mix is shifting, with inference customers now representing 30% of their business, a figure projected to surpass training by the end of 2026.

These specialized providers are attracting strategic attention not merely for offering GPU access, but for streamlining infrastructure complexities. They optimize the entire stack—storage, networking, and scheduling—specifically for inference-first economics, a departure from the general-purpose operational models of traditional hyperscalers. For organizations pursuing token production, these environments offer a more efficient operational framework than conventional cloud providers.

The Rise of Managed Inference

For enterprises recognizing the challenges of building and managing their own inference infrastructure, the outsourcing of inference services is emerging as a viable alternative. The Q1 survey indicated a substantial increase in interest in inference outsourcing and managed LLM providers, rising from 13.2% to 23.1% within a single quarter. This nearly 10-percentage-point growth reflects a growing awareness that internal inference infrastructure development can incur significant hidden costs.

Providers such as Baseten, Anyscale, Fireworks AI, and Together AI offer predictable pricing and service-level agreements, alleviating the need for customers to become experts in vLLM tuning or distributed GPU scheduling. In this model, enterprises remain token consumers, but they strategically offload the complexity of the underlying stack. This approach is proving most effective for organizations where inference volume does not yet justify the operational burden of running their own stack.

Simplifying the Hybrid Stack

The path to becoming a token producer is being facilitated by a new generation of hybrid-cloud AI platforms. Solutions from Red Hat, Nutanix, and Broadcom are designed to operationalize open-source inference infrastructure, reducing the need for extensive systems integration efforts by individual companies. Modern inference relies on complex open-source components like vLLM for high-throughput serving, Triton for model orchestration, and Kubernetes for cluster management. While individually powerful, integrating and optimizing these systems at scale presents a significant challenge.

These newer platforms promise enhanced portability, enabling the development of an inference stack that can be deployed across various environments, including hyperscale clouds, specialized clouds, and on-premises data centers. Interest in these managed, do-it-yourself stacks is growing, with survey responses indicating a rise from 11.3% in January to 17.9% in February. This flexibility is crucial, as enterprise AI deployments will inevitably be distributed based on data location, sensitivity, and cost-effectiveness. The winning platforms in the evolving token economy will be those that facilitate standardization through portability, empowering enterprises to dynamically shift between consumer and producer roles as their needs evolve.

The Architecture of Efficiency: Technical Levers for Productivity

Addressing the pervasive 5% GPU utilization challenge requires more than just software enhancements; it demands a fundamental restructuring of the AI efficiency stack. Many organizations are discovering that high operational activity does not equate to high productivity. A compute cluster can operate at peak capacity yet remain economically inefficient if its time-to-first-token is excessive or if inference requests spend disproportionate time in the prefill stage.
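One way to surface this is to log three timestamps per request and compute how much of each request's lifetime passes before the first token appears. The sketch below is a minimal illustration; the `RequestTiming` record and its field names are hypothetical, and the time before the first token includes queueing as well as prefill.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class RequestTiming:
    arrival_s: float       # when the request was received
    first_token_s: float   # when the first output token was emitted
    done_s: float          # when the last output token was emitted


def time_to_first_token(r: RequestTiming) -> float:
    return r.first_token_s - r.arrival_s


def pre_first_token_share(r: RequestTiming) -> float:
    """Fraction of the request's lifetime spent before any output
    (queueing plus prefill)."""
    return time_to_first_token(r) / (r.done_s - r.arrival_s)


def mean_share(requests: List[RequestTiming]) -> float:
    return sum(pre_first_token_share(r) for r in requests) / len(requests)


if __name__ == "__main__":
    # Hypothetical timings, in seconds.
    sample = [RequestTiming(0.0, 3.0, 4.0), RequestTiming(10.0, 10.5, 12.5)]
    for r in sample:
        print(f"TTFT={time_to_first_token(r):.2f}s "
              f"share={pre_first_token_share(r):.0%}")
```

A cluster whose requests routinely spend most of their lifetime before the first token can show high GPU activity while delivering little useful output per dollar.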

Inference economics are dictated by the volume of valuable output generated per unit of cost. This necessitates a paradigm shift from measuring GPU activity—simply confirming that GPUs are powered on—to measuring GPU productivity. Achieving this productivity hinges on optimizing three critical technical components: the network, memory, and storage subsystems.

Networking: Mitigating the Cost of Latency

The network infrastructure serves as the often-overlooked foundation of efficient inference. In distributed computing environments, the speed of data transfer between compute nodes and storage directly impacts whether a GPU is actively processing or idly waiting. Remote Direct Memory Access (RDMA) has become the industry standard for this data movement, enabling direct data transfer between memory and GPUs, bypassing the CPU and eliminating latency spikes characteristic of traditional network architectures. An RDMA-enabled setup can potentially increase per-GPU output by a factor of ten for concurrent workloads.

Without such advanced networking capabilities, enterprises effectively incur a “waiting tax” on their GPU resources. As model context windows expand and multi-node orchestration becomes standard, network performance becomes the determining factor in whether a cluster operates as a high-speed processing facility or as a data bottleneck.

Solving the Memory Tax: Shared KV Cache Implementation

With the proliferation of larger models and significantly expanded context windows, the cost associated with repeatedly reconstructing prompt states has become prohibitive. Large language models (LLMs) utilize key-value (KV) caches to maintain conversational context. Traditionally stored in local GPU memory, these caches are both expensive and capacity-limited, creating a “memory tax” that severely impacts unit economics as concurrency increases.

To circumvent this issue, the industry is migrating towards persistent, shared KV cache architectures. By centralizing the cache on high-performance storage rather than distributing it redundantly across multiple GPU nodes, organizations can substantially reduce prefill overhead and enhance context reuse. Emerging architectures are demonstrating this efficacy; for instance, the VAST Data AI Operating System, leveraging VAST C-nodes with Nvidia BlueField-4 DPUs, enables pod-scale shared KV cache, streamlining legacy storage tiers. Similarly, the HPE Alletra Storage MP X10000, validated by Nvidia, is engineered to optimize data delivery to inference resources, minimizing the coordination overhead that causes bottlenecks at scale. WEKA is another prominent player in this domain.
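The reuse idea behind a shared cache can be shown with a toy example. The sketch below is an in-memory stand-in, not a description of any vendor's implementation: it chain-hashes fixed-size token blocks so that a key identifies the whole prefix up to that block, then reports how many leading tokens of a new request already have cached state. The block size, class name, and placeholder payload are all hypothetical.

```python
import hashlib
from typing import Dict, List, Sequence

BLOCK = 4  # tokens per cache block (hypothetical; real systems use larger blocks)


def block_keys(tokens: Sequence[int]) -> List[str]:
    """Return one key per full block; each key covers the entire
    prefix up to and including that block."""
    keys: List[str] = []
    running = hashlib.sha256()
    full = len(tokens) - len(tokens) % BLOCK
    for start in range(0, full, BLOCK):
        running.update(repr(list(tokens[start:start + BLOCK])).encode())
        keys.append(running.hexdigest())
    return keys


class SharedPrefixCache:
    """Toy stand-in for a cache that many inference nodes could consult."""

    def __init__(self) -> None:
        self._store: Dict[str, str] = {}  # key -> placeholder for a KV block

    def insert(self, tokens: Sequence[int]) -> None:
        for key in block_keys(tokens):
            self._store.setdefault(key, "kv-block")

    def cached_prefix_len(self, tokens: Sequence[int]) -> int:
        """Number of leading tokens whose KV state is already cached."""
        hit = 0
        for key in block_keys(tokens):
            if key not in self._store:
                break
            hit += BLOCK
        return hit


if __name__ == "__main__":
    system_prompt = list(range(8))            # shared by every request
    cache = SharedPrefixCache()
    cache.insert(system_prompt + [100, 101, 102, 103])
    request = system_prompt + [200, 201]
    reused = cache.cached_prefix_len(request)
    print(f"reused {reused} tokens, prefill needed for {len(request) - reused}")
```

In this toy run the second request reuses the cached system prompt and only prefills its own suffix, which is the saving a shared cache is meant to deliver across nodes.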

The Compression Advantage

Beyond hardware, algorithmic innovations are redefining inference memory capabilities. Google’s recent presentation of TurboQuant at ICLR 2026 exemplifies this advancement, demonstrating up to a 6x compression ratio for KV caches with no discernible loss in accuracy. Such techniques facilitate the creation of large vector indices with minimal memory footprints and near-zero preprocessing times, enabling higher concurrency on existing hardware and reducing latency spikes caused by rebuild storms.
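For intuition about where a compression ratio comes from, consider plain uniform quantization of a cached vector. The sketch below is explicitly not TurboQuant, whose method is not described here; it is a generic textbook technique, and the 4-bit width and per-vector overhead are assumptions chosen for illustration.

```python
from typing import List, Tuple


def quantize(vec: List[float], bits: int = 4) -> Tuple[List[int], float, float]:
    """Map each value to an integer code in [0, 2**bits - 1]."""
    lo, hi = min(vec), max(vec)
    levels = (1 << bits) - 1
    scale = (hi - lo) / levels if hi > lo else 1.0
    codes = [round((x - lo) / scale) for x in vec]
    return codes, lo, scale


def dequantize(codes: List[int], lo: float, scale: float) -> List[float]:
    """Reconstruct approximate values from the integer codes."""
    return [lo + c * scale for c in codes]


def compression_ratio(n: int, source_bits: int = 16, bits: int = 4,
                      overhead_bits: int = 64) -> float:
    """Ratio of original to compressed size for an n-element vector,
    counting two 32-bit floats (offset and scale) as overhead."""
    return (n * source_bits) / (n * bits + overhead_bits)


if __name__ == "__main__":
    vec = [0.0, 0.1, -0.3, 0.7, 1.2, -1.0, 0.55, 0.25]
    codes, lo, scale = quantize(vec)
    restored = dequantize(codes, lo, scale)
    worst = max(abs(a - b) for a, b in zip(vec, restored))
    print(f"worst-case error: {worst:.4f} (step size {scale:.4f})")
    print(f"ratio for a 128-element vector: {compression_ratio(128):.2f}x")
```

The reconstruction error of this naive scheme is bounded by half a quantization step, which is usually too coarse to claim no accuracy loss. Closing that gap at higher ratios is what more sophisticated methods aim to do.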

However, the standardization of compression techniques remains a point of contention, with no universally adopted open-source standard yet established. This evolving landscape suggests a potential proprietary battleground between major players like Google and Nvidia. Consequently, the implementation of these compression strategies may involve proprietary stack dependencies.

Storage as a Financial Decision Driver

Storage is no longer solely a technical consideration; it has become a critical financial decision. Platforms like Dell PowerScale reportedly deliver up to a 19x improvement in time-to-first-token compared to conventional methods, according to Dell’s data. By decoupling high-performance shared storage and memory-intensive data access from scarce GPU resources, these platforms enable more efficient inference scaling. When storage layers can consistently supply data to GPU-intensive workloads, it prevents costly idle periods for valuable compute resources.

In the current efficiency-driven landscape, the objective is to elevate GPU utilization beyond the 5% threshold by ensuring that every processing cycle is dedicated to token generation rather than data movement. However, as the infrastructure becomes more efficient, the security perimeter faces increased exposure. High-productivity tokens lose their value if the underlying data cannot be reliably trusted.

Sovereignty and the Agentic Future: Establishing Trust

The ultimate impediment to realizing substantial returns on AI investments is not a technical constraint but a trust deficit. As enterprise AI applications evolve from basic chatbots to sophisticated autonomous agents, the associated risk profile intensifies. Agents necessitate deep access to internal systems and proprietary intellectual property to function effectively. Without a robust sovereign architecture, this access can create significant liabilities that many organizations are ill-equipped to manage.

VentureBeat’s research into AI governance highlights a critical disconnect: many organizations believe their AI environments are secure, yet 72% admit they lack the level of control and security they assumed they had. This “governance mirage” poses a substantial risk, particularly as agentic systems transition into production environments. In the past year alone, 88% of executives reported security incidents directly related to AI agents.

Sovereignty as a Foundational Architectural Principle

Data sovereignty is often treated as a compliance checkbox, focused on geographic or regulatory mandates. However, for strategic enterprises, it must be integrated as a core architectural principle, emphasizing the maintenance of control, data lineage, and explainability over the information powering agentic workflows. This requires a refined approach to data maturity, mirroring established frameworks like the medallion architecture. Within this model, data progresses through layers of usability and trust—from raw ingestion at the bronze level to refined gold and, finally, platinum-quality operational data.

AI inference processes must adhere to these rigorous standards. Agentic systems require not only accessible context but also trusted context. Furnishing incorrect data to an agent or exposing sensitive intellectual property through a non-sovereign endpoint introduces both business and regulatory risks. Compartmentalization must be a design imperative from the outset, ensuring clear delineation of which models and agents can access specific data layers, under what conditions, and with documented lineage.
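Compartmentalization of this kind can be expressed as a small policy check. The sketch below is a hypothetical illustration, not a reference design: the tier names follow the medallion layers (with silver as the usual intermediate layer), and the dataset names, agent names, and compartments are invented for the example.

```python
from dataclasses import dataclass
from enum import IntEnum
from typing import Dict, FrozenSet, List


class Tier(IntEnum):
    BRONZE = 1
    SILVER = 2
    GOLD = 3
    PLATINUM = 4


@dataclass(frozen=True)
class Dataset:
    name: str
    tier: Tier
    compartment: str       # e.g. a business unit or sensitivity domain


@dataclass(frozen=True)
class AgentPolicy:
    agent: str
    min_tier: Tier                 # lowest trust level the agent may consume
    compartments: FrozenSet[str]   # compartments the agent may enter


audit_log: List[Dict[str, object]] = []   # documented lineage of every decision


def can_read(policy: AgentPolicy, dataset: Dataset) -> bool:
    """Allow access only to sufficiently trusted data inside an
    approved compartment, and record the decision."""
    allowed = (dataset.tier >= policy.min_tier
               and dataset.compartment in policy.compartments)
    audit_log.append({"agent": policy.agent, "dataset": dataset.name,
                      "tier": dataset.tier.name, "allowed": allowed})
    return allowed


if __name__ == "__main__":
    support_agent = AgentPolicy("support-agent", Tier.GOLD, frozenset({"support"}))
    print(can_read(support_agent, Dataset("ticket_history", Tier.GOLD, "support")))
    print(can_read(support_agent, Dataset("raw_clickstream", Tier.BRONZE, "support")))
    print(can_read(support_agent, Dataset("rd_formulas", Tier.PLATINUM, "research")))
```

The point of the design is that both conditions are enforced together: trusted data in the wrong compartment is denied just as untrusted data in the right one is, and every decision leaves a record.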

Bringing AI to the Data: A Strategic Imperative

The fundamental question guiding the future of agentic AI is whether to move data to the AI or vice versa. For highly sensitive workloads, migrating data to a centralized model endpoint is often counterproductive. The trend towards private AI—where inference occurs closer to the source of trusted data—is gaining significant traction. This architecture leverages sovereign clouds, private environments, or governed enterprise platforms to maintain data perimeter integrity.

In this context, the decision to operate as a token producer becomes a distinct security advantage. By owning the inference stack, an enterprise can enforce governance and lineage directly at the infrastructure layer, ensuring that the intellectual property used to ground an agent never leaves the organization’s control.

The Next Platform War: Inference Economics and Data Trust

The ultimate battleground for AI dominance will not be decided by the size of GPU clusters, but by the efficacy of inference economics and the robustness of the data foundation. Organizations that prevail in this efficiency-driven era will be those capable of delivering the lowest cost per useful token and the most accelerated path to production deployment. They will be the ones who have successfully moved beyond the speculative phase to focus resolutely on productive output.

Achieving a tangible return on AI investments mandates a cultural and strategic transformation—shifting from a mindset of merely securing the infrastructure stack to actively optimizing (“squeezing”) it for maximum value. This requires architectural discipline, a laser focus on token-level ROI, and an unwavering commitment to data sovereignty. When an organization can generate its own tokens both efficiently and securely, AI transitions from an experimental project to a repeatable, economically advantageous business driver.

This strategic approach is key to realizing genuine ROI and will form the bedrock of next-generation enterprise advantage.

Rob Strechay is a Contributing VentureBeat analyst and principal at Smuget Consulting, a research and advisory firm focused on data infrastructure and AI systems.

Disclosure: Smuget Consulting engages or has engaged in research, consulting, and advisory services with many technology companies, which can include those mentioned in this article. Analysis and opinions expressed herein are specific to the analyst individually, and data and other information that might have been provided for validation, not those of VentureBeat as a whole.

Business Style Takeaway: Enterprises must urgently shift focus from GPU acquisition to optimizing existing AI infrastructure utilization to achieve financial viability. The future success in AI will be dictated by operational efficiency, cost-per-token economics, and robust data sovereignty, rather than sheer hardware capacity.

Source: venturebeat.com