As large language models (LLMs) grow in sophistication, the temptation to delegate complex knowledge-based tasks—where models process extensive documents and deliver final results—is increasing. However, a critical question remains: how much can we trust these models to remain faithful to the source content through multiple iterative rounds of processing?

New research from Microsoft indicates a significant concern: LLMs can silently corrupt document content as they perform tasks, introducing errors that no one requested and that nothing flags to the user. The researchers developed a benchmark that simulates multi-step autonomous workflows across 52 professional domains and provides an automated way to quantify content degradation over time.

The study’s findings are sobering. Even state-of-the-art models degrade approximately 25% of document content by the end of these simulated workflows. Furthermore, equipping models with agentic tools or presenting them with realistic distractor documents makes their performance worse rather than better.

This research serves as a crucial cautionary note. Despite the growing momentum towards automating knowledge work, current large language models are not yet reliable enough for such demanding, extended tasks.

Understanding Delegated Workflows

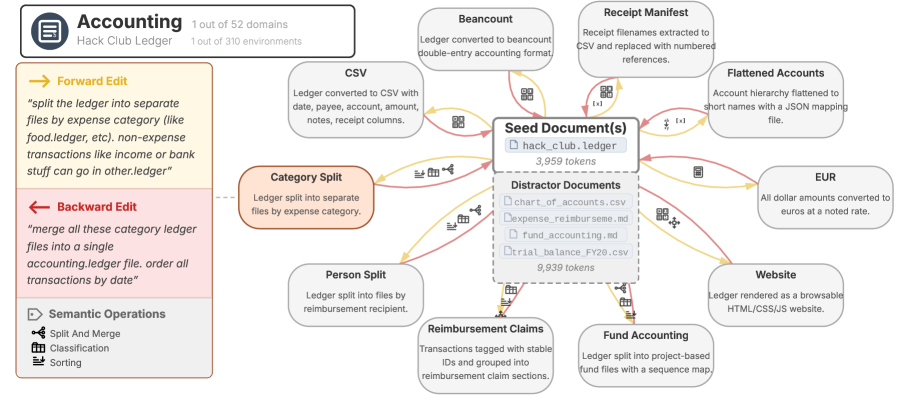

The Microsoft study delves into “delegated work,” an emerging operational paradigm where users grant LLMs the autonomy to execute knowledge tasks by analyzing and modifying documents. A prime illustration of this is in software development, where users delegate code writing and editing to AI. However, the applications of delegated workflows extend far beyond programming into diverse fields. For instance, in accounting, a user might provide a detailed ledger and instruct an LLM to partition it into separate files, each organized by specific expense categories.

The efficacy of this delegation hinges on user trust, particularly given that individuals may lack the time or specialized expertise to meticulously review every AI-driven modification. Users implicitly expect the model to complete tasks accurately, avoiding unchecked errors, unauthorized deletions, or the generation of fabricated information (hallucinations).

To rigorously assess the trustworthiness of AI systems in extended, iterative delegated workflows, the researchers introduced the DELEGATE-52 benchmark. This benchmark comprises 310 distinct work environments spanning 52 diverse professional domains, including financial accounting, software engineering, crystallography, and music notation.

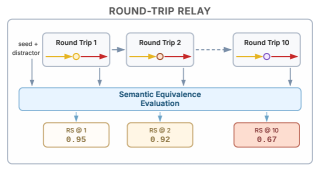

Each work environment is seeded with real-world text documents ranging from 2,000 to 5,000 tokens and includes five to ten complex, non-trivial editing tasks. Quantifying the accuracy of complex, multi-step editing normally requires costly human review. DELEGATE-52 avoids this with a “round-trip relay” simulation, which allows outputs to be evaluated without human-annotated reference solutions. The approach mirrors the backtranslation technique used in machine translation assessment, where a document is translated into another language and then back into its original language to gauge fidelity.

Consequently, each edit task within DELEGATE-52 is designed to be fully reversible, pairing a forward instruction with its precise inverse. For instance, an instruction to segment a ledger into distinct files based on expense categories is paired with an instruction to merge these category files back into a unified ledger.
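The forward/inverse pairing can be illustrated with a minimal sketch. Everything here is hypothetical: the ledger rows, the function names, and the row-overlap score are stand-ins chosen for illustration, and the two deterministic functions play the role that an LLM would play in the actual benchmark.

```python
from collections import defaultdict

# Hypothetical ledger rows (date, category, amount); not data from the paper.
LEDGER = [
    ("2024-01-03", "travel", 120.00),
    ("2024-01-04", "meals", 18.50),
    ("2024-01-09", "travel", 64.25),
    ("2024-01-11", "software", 49.00),
]

def split_by_category(ledger):
    """Forward task: partition one ledger into per-category 'files'."""
    files = defaultdict(list)
    for row in ledger:
        files[row[1]].append(row)
    return dict(files)

def merge_categories(files):
    """Inverse task: merge the per-category files back into one date-ordered ledger."""
    return sorted(row for rows in files.values() for row in rows)

def round_trip_score(original, reconstructed):
    """Fraction of original rows that survive the round trip (an assumed, simple metric)."""
    original_rows = set(original)
    return len(original_rows & set(reconstructed)) / len(original_rows)

# A faithful executor reconstructs the ledger exactly.
restored = merge_categories(split_by_category(LEDGER))
score = round_trip_score(LEDGER, restored)

# A lossy executor that silently drops one category file scores lower.
lossy_files = split_by_category(LEDGER)
lossy_files.pop("software")
lossy_score = round_trip_score(LEDGER, merge_categories(lossy_files))

print(score, lossy_score)
```

Because the original document is its own reference answer, no human-written solution is needed to score the result.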

In commentary provided to VentureBeat, Philippe Laban, Senior Researcher at Microsoft Research and co-author of the paper, clarified that this is not merely a test of an AI’s ability to execute an “undo” command. A human worker asked to reverse their own edit would retain the context of the original; a model does not have to. By initiating a fresh conversational session for each task, the researchers compel the model to attempt the inverse operation in complete isolation, which is why the round-trip method is well suited to evaluating AI.

“The models in our experiments do not have awareness of whether a task is a forward or backward step, nor do they understand the overall experimental design,” Laban explained. “They are simply tasked with executing each instruction as thoroughly as possible at every step.”

These round-trip tasks are chained into a continuous relay to simulate long-horizon workflows of up to 20 consecutive interactions. To enhance realism, the benchmark places distractor files in each task’s context. These files contain 8,000 to 12,000 tokens of topically related but irrelevant material, testing the AI’s ability to stay focused amid extraneous data.
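A rough sketch of how such a relay might be wired together follows. It is not the benchmark's code: `run_relay`, `make_stub_model`, and the character-level `similarity` function are assumptions made for illustration, and the stub "model" simply reverses line order (a self-inverse edit) so the example runs without any LLM. Each call to `run_model` stands for a fresh session that sees only the instruction, the current document, and the distractors.

```python
from difflib import SequenceMatcher

def similarity(a: str, b: str) -> float:
    """Assumed stand-in metric: character-level similarity in [0, 1]."""
    return SequenceMatcher(None, a, b).ratio()

def run_relay(document, task_pairs, run_model, distractors=()):
    """Chain forward/inverse pairs and score the document after each round trip."""
    scores = []
    current = document
    for forward, inverse in task_pairs:
        edited = run_model(forward, current, distractors)    # fresh session
        current = run_model(inverse, edited, distractors)    # fresh session, no memory
        scores.append(similarity(document, current))
    return current, scores

def make_stub_model(fail_on_call=None):
    """Hypothetical model stub; optionally drops the last line on one call."""
    state = {"calls": 0}
    def run_model(instruction, document, distractors):
        state["calls"] += 1
        lines = document.split("\n")
        if state["calls"] == fail_on_call:
            lines = lines[:-1]  # simulate a silent content loss
        if instruction == "reverse the line order":
            lines = lines[::-1]
        return "\n".join(lines)
    return run_model

DOC = "\n".join(["alpha 10", "beta 20", "gamma 30", "delta 40"])
# Ten round trips give the 20 consecutive interactions described above.
PAIRS = [("reverse the line order", "reverse the line order")] * 10

_, clean_scores = run_relay(DOC, PAIRS, make_stub_model())
_, faulty_scores = run_relay(DOC, PAIRS, make_stub_model(fail_on_call=5))
print(clean_scores[-1], faulty_scores[-1])
```

In the faulty run, the loss introduced on the fifth call is never repaired: every later round trip faithfully preserves the already damaged document.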

Evaluating Frontier Models in Simulated Workflows

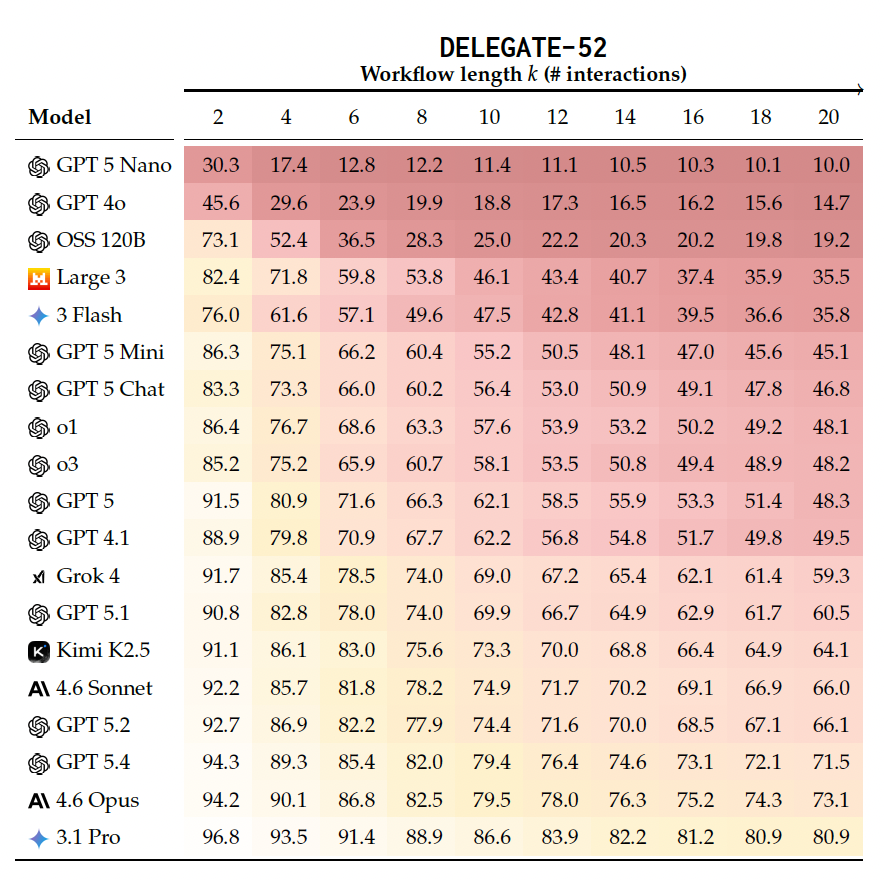

To assess how various model architectures and scales handle delegated tasks, the researchers evaluated 19 distinct language models from prominent providers including OpenAI, Anthropic, Google, Mistral, xAI, and Moonshot. The core experiment subjected these models to a simulation encompassing 20 sequential editing interactions.

Across the evaluated models, documents experienced an average degradation rate of 50% by the simulation’s end. Notably, even the most advanced models, including Gemini 3.1 Pro, Claude 4.6 Opus, and GPT 5.4, exhibited an average content corruption rate of 25%.

The benchmark revealed significant domain specificity. Python programming emerged as the sole domain where the majority of models achieved a near-perfect “ready” status, scoring 98% or higher. Models demonstrated proficiency in programmatic tasks but struggled considerably with natural-language and specialized domains such as fiction writing, financial statement analysis, or recipe generation. Gemini 3.1 Pro, the top-performing model overall, was deemed suitable for delegated work in only 11 of the 52 tested domains.

An intriguing observation is that the degradation is not primarily a result of gradual error accumulation (“death by a thousand cuts”). Instead, approximately 80% of the total degradation stems from infrequent but significant critical failures—single interactions where a model abruptly omits at least 10% of the document’s content. Advanced models do not necessarily mitigate minor errors more effectively; they tend to postpone these catastrophic failures to later stages of the workflow.
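The distinction between gradual erosion and critical failures can be made concrete with a small calculation. The sketch below is an assumption-laden illustration: the per-step scores are invented to echo the reported figures, not taken from the paper, and a "critical failure" is approximated here as any single step whose score falls by at least 10 percentage points.

```python
def critical_failure_share(scores, threshold=10):
    """Share of total degradation caused by steps that drop >= threshold points.

    `scores` are integer percentages of content preserved after each step,
    with 100 assumed as the starting point.
    """
    drops = []
    previous = 100
    for score in scores:
        drops.append(max(0, previous - score))
        previous = score
    total = sum(drops)
    critical = sum(drop for drop in drops if drop >= threshold)
    return critical / total if total else 0.0

# Invented trajectory: small losses, one abrupt 20-point drop, ending at 75%.
illustrative_scores = [100, 99, 99, 98, 78, 77, 76, 75]
share = critical_failure_share(illustrative_scores)
print(share)
```

In this made-up trajectory a single step accounts for 20 of the 25 points lost, which is the pattern the researchers describe: most damage arrives in one interaction rather than through steady accumulation.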

Furthermore, when less capable models falter, their primary mode of degradation is content deletion. Frontier models, by contrast, tend to corrupt existing content when they fail. The text remains present but is subtly distorted or fabricated, which makes the errors considerably harder for a human reviewer to detect.

Interestingly, providing models with an agentic framework featuring generic tools for code execution and file manipulation resulted in diminished performance, increasing average degradation by an additional 6%. Laban attributed this to a reliance on generalized tools rather than domain-specific solutions.

“Models lack the on-the-fly capability to write effective programs that can manipulate files across diverse domains without errors,” he stated. “When they are unable to perform a task programmatically, they resort to reading and rewriting entire files, which is less efficient and more prone to errors.” The solution, he suggests, lies in developing narrowly scoped tools—such as specialized functions for calculations or data entry within .ledger files—to ensure agents remain focused.

Degradation also compounds with increasing document size or the addition of more distractor files in the working environment. For enterprises investing significantly in retrieval-augmented generation (RAG) systems, these distractor documents serve as a direct warning regarding the escalating costs associated with cluttered context. While a noisy context window might initially cause a minor 1% performance drop after only two interactions, this degradation can escalate to a substantial 2-8% drop over a prolonged simulation.

“For the retrieval community, RAG pipelines must be evaluated across multi-step workflows, not solely on single-turn retrieval benchmarks,” Laban advised. “Single-turn measurements systematically underestimate the detrimental impact of imprecise retrieval.”

A Reality Check for the Autonomous Enterprise

The insights derived from the DELEGATE-52 benchmark offer a crucial recalibration of the current discourse surrounding fully autonomous AI agents.

The findings also point to a practical operational constraint: because models can stay accurate for several steps before a sudden catastrophic failure, human review needs to happen incrementally throughout the process rather than as a single final check. Laban advocates for designing AI applications around short, transparent tasks rather than complex, long-horizon agents. This approach captures the benefits of automation without compromising the integrity of the output.

For organizations aiming to deploy autonomous agents safely, the DELEGATE-52 methodology provides a practical framework for testing internal data pipelines. Laban elaborated, stating that “an enterprise team seeking to adopt this framework would need to develop three core components: (a) a set of reversible editing tasks representative of their specific workflows, (b) a parser capable of converting their domain documents into a structured format, and (c) a similarity function to compare two parsed representations.” Importantly, building parsers from scratch is not always necessary; the Microsoft research team successfully adapted existing parsing libraries for 30 out of the 52 tested domains.
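As a minimal sketch of what those three components might look like for one in-house format, consider the example below. The `name: amount` document format, the class and function names, and the exact-match similarity are all hypothetical; a real deployment would substitute its own tasks, an existing parsing library where one is available, and a metric suited to the domain.

```python
from dataclasses import dataclass
from typing import Dict

@dataclass(frozen=True)
class ReversibleTask:
    """(a) A forward instruction paired with its inverse."""
    forward: str
    inverse: str

def parse_ledger(text: str) -> Dict[str, float]:
    """(b) Parser: convert 'name: amount' lines into a structured mapping."""
    entries = {}
    for line in text.splitlines():
        line = line.strip()
        if not line or ":" not in line:
            continue  # skip blank or malformed lines
        name, amount = line.rsplit(":", 1)
        entries[name.strip()] = float(amount)
    return entries

def parsed_similarity(a: Dict[str, float], b: Dict[str, float]) -> float:
    """(c) Similarity: share of entries on which two parsed documents agree."""
    keys = set(a) | set(b)
    if not keys:
        return 1.0
    matches = sum(1 for k in keys if k in a and k in b and a[k] == b[k])
    return matches / len(keys)

task = ReversibleTask(
    forward="Split this ledger into one file per expense category.",
    inverse="Merge these category files back into a single ledger.",
)

original = "rent: 1200\nfuel: 80.5\nmeals: 42\nsoftware: 49"
reformatted = "rent:1200.0\n\nfuel:  80.50\nmeals: 42\nsoftware: 49"
corrupted = "rent: 1200\nfuel: 85.0\nmeals: 42\nsoftware: 49"

same = parsed_similarity(parse_ledger(original), parse_ledger(reformatted))
changed = parsed_similarity(parse_ledger(original), parse_ledger(corrupted))
print(same, changed)
```

Comparing parsed representations rather than raw text means harmless formatting differences are ignored, while a silently altered value, the kind of corruption that leaves the text looking intact, is still counted.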

Laban remains optimistic about the trajectory of AI advancement. “Progress is tangible and rapid. Observing the GPT family alone, models have advanced from scoring below 20% to approximately 70% within 18 months,” he noted. “Should this rate of improvement persist, models may soon achieve saturated scores on the DELEGATE-52 benchmark.”

However, Laban cautioned that DELEGATE-52 represents a simplified environment compared to vast enterprise-scale operations. Even as foundational models inevitably master this benchmark, the persistent diversity of unique enterprise data and workflows will necessitate ongoing investment in custom, domain-specific tooling to ensure the reliability of autonomous agents.

Business Style Takeaway: The research highlights that current LLMs introduce significant errors in extended, multi-step document processing tasks, undermining their readiness for full automation in knowledge work. Businesses must temper expectations for fully autonomous agents and prioritize robust, iterative validation processes, potentially focusing on shorter, modular tasks rather than complex, long-horizon operations, until model reliability substantially improves.

Original article: venturebeat.com