The ability of artificial intelligence to perceive and interpret visual data, particularly live video feeds, presents a significant opportunity for enterprises across various sectors. Beyond traditional security applications, such AI can automate the extraction of compelling moments from marketing content for social media, identify and flag inconsistencies in video production, or analyze non-verbal cues and actions in research studies and candidate assessments.

While advanced video analysis AI exists, it has not yet reached mainstream adoption. Perceptron Inc., a two-year-old startup, aims to democratize this capability with the launch of its flagship video analysis reasoning model, Mk1. Announced today, Mk1 utilizes a proprietary “multi-modal recipe” developed over 16 months, engineered to tackle the complexities of the physical world. The model’s pricing is a key differentiator, offered via API at approximately 80-90% less than leading competitors like Anthropic’s Claude Sonnet 4.5, OpenAI’s GPT-5, and Google’s Gemini 3.1 Pro, with costs set at $0.15 per million input tokens and $1.50 per million output tokens.

Under the leadership of Co-founder and CEO Armen Aghajanyan, formerly of Meta FAIR and Microsoft, Perceptron has focused on developing AI that can comprehend cause-and-effect, object dynamics, and fundamental physics, advancing beyond mere pattern recognition to a deeper understanding of the physical world.

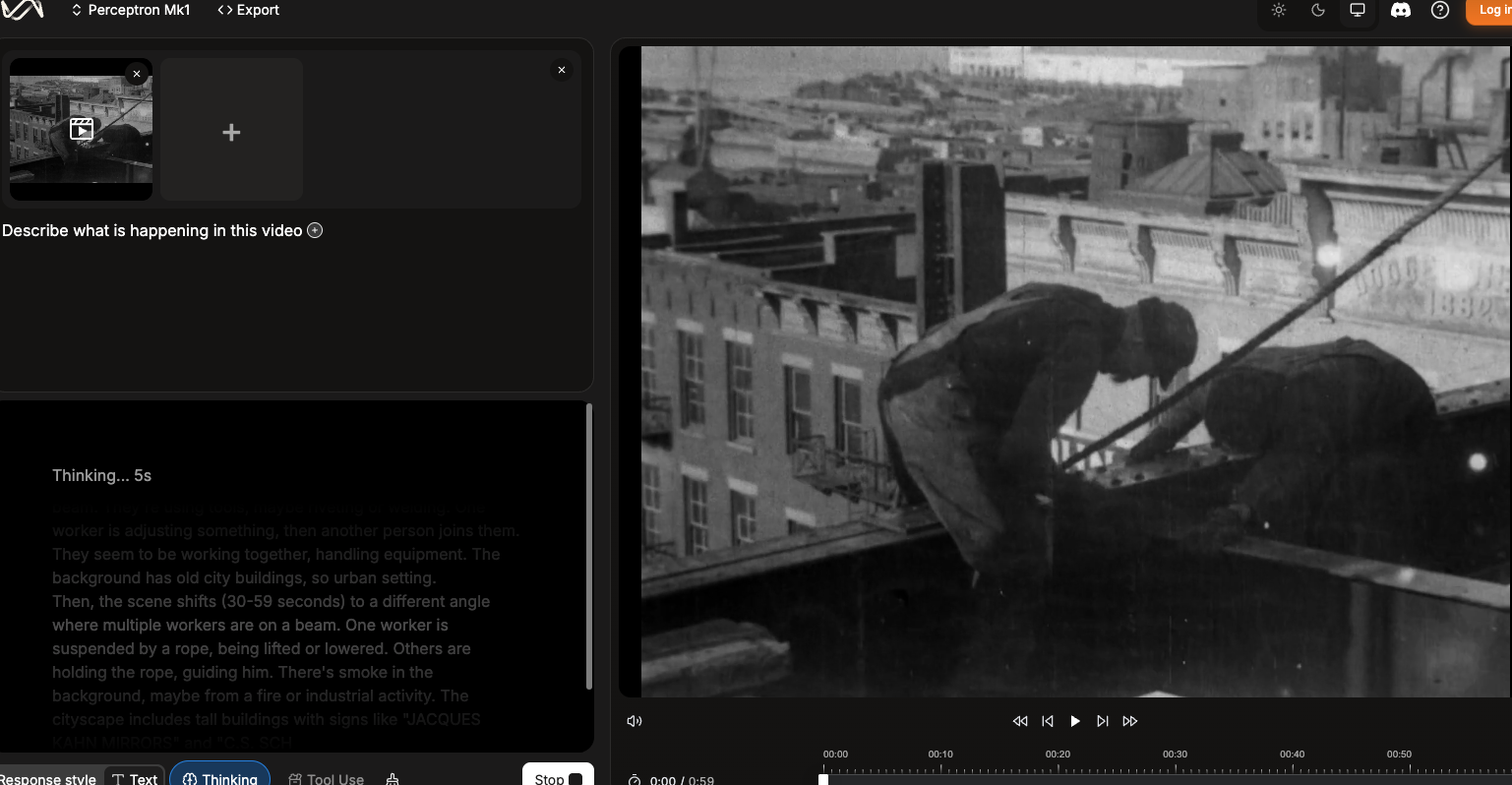

This development marks a significant step towards AI that can reason about physical interactions with the same sophistication it applies to language. A public demo of Perceptron’s capabilities is available for users and potential enterprise clients.

Performance across spatial and video benchmarks

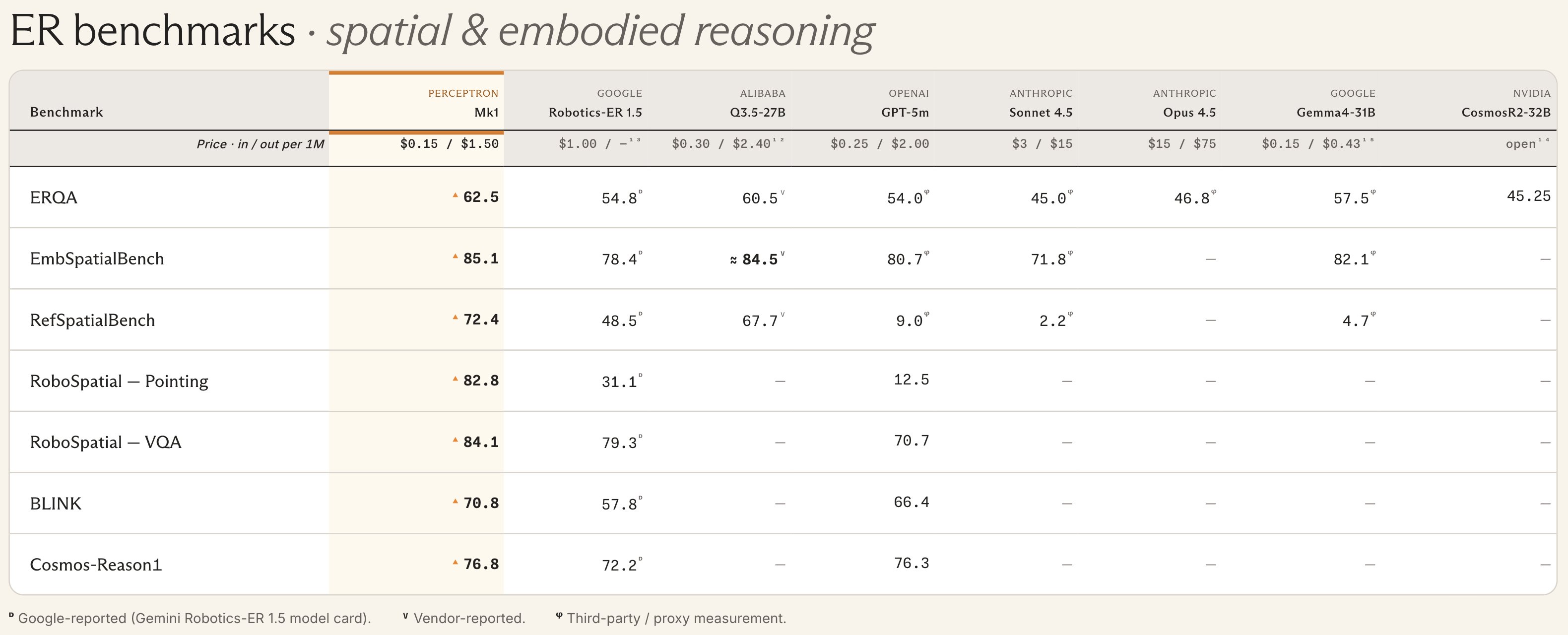

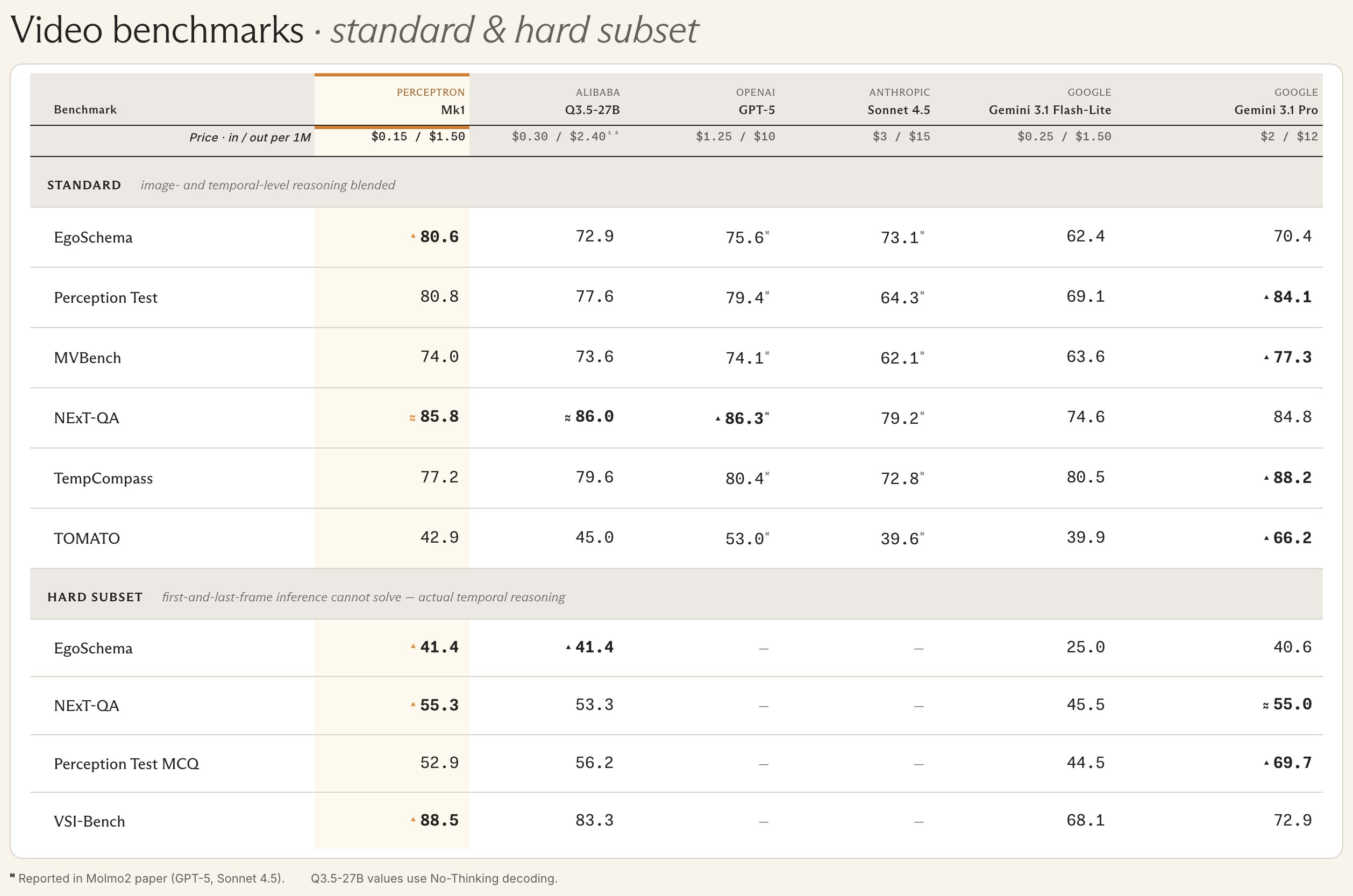

The Mk1 model’s capabilities are validated by its performance on a range of industry-standard benchmarks designed to assess grounded understanding and spatial reasoning.

In spatial reasoning tasks, Mk1 achieved a score of 85.1 on the EmbSpatialBench, outperforming Google’s Robotics-ER 1.5 (78.4) and Alibaba’s Q3.5-27B (approximately 84.5). The model also demonstrated exceptional performance on the RefSpatialBench, with a score of 72.4, significantly surpassing competitors like GPT-5m (9.0) and Sonnet 4.5 (2.2) in referring expression comprehension.

In video benchmarks, Mk1 also showed strong performance. On the challenging “Hard Subset” of EgoSchema, which requires more than just frame-by-frame analysis, Mk1 achieved a score of 41.4, matching Alibaba’s Q3.5-27B and significantly outperforming Gemini 3.1 Flash-Lite (25.0). Its score of 88.5 on VSI-Bench, a benchmark of visual-spatial reasoning over video, is consistent with that result.

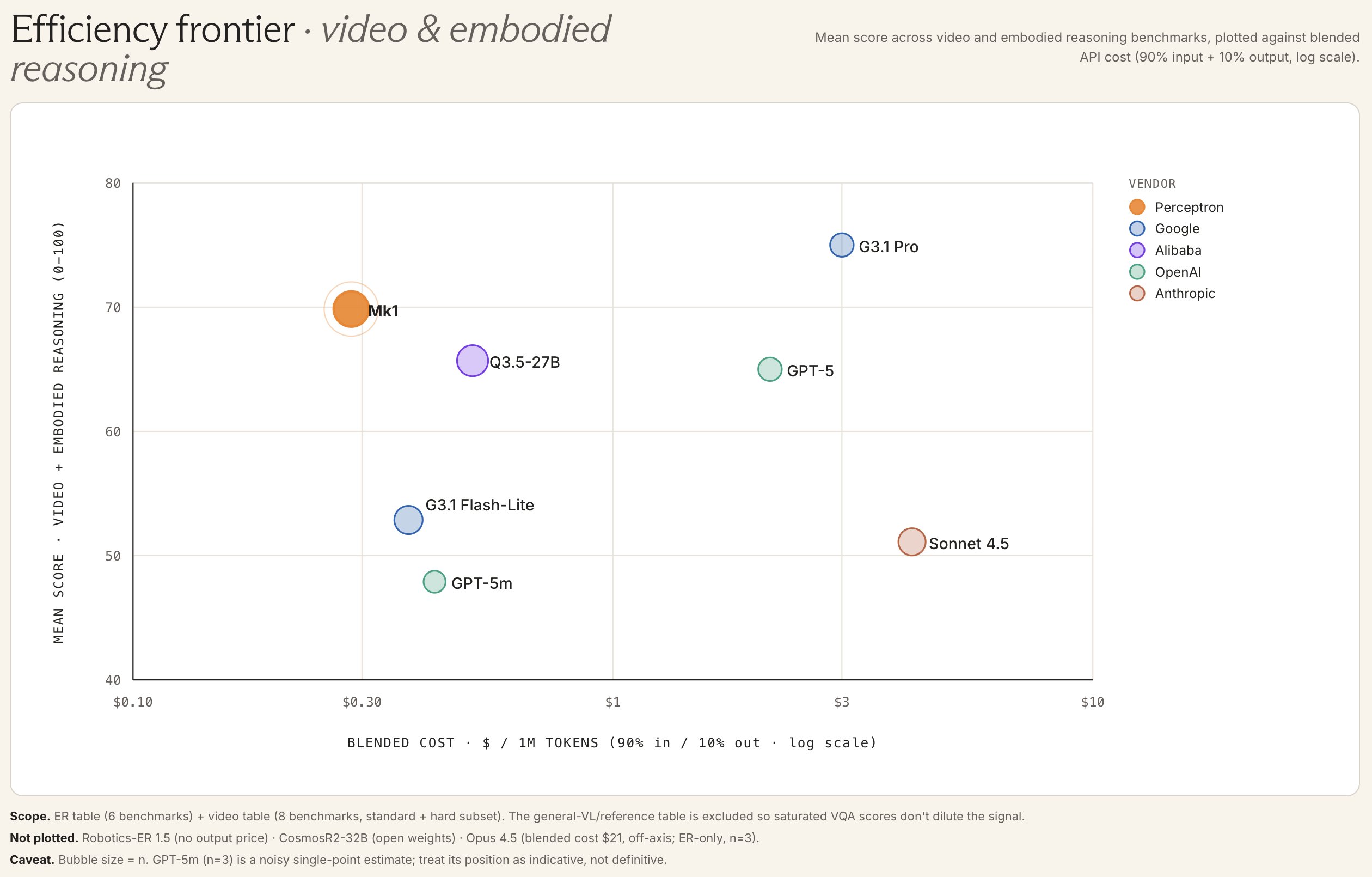

Market positioning and the efficiency frontier

Perceptron is strategically targeting the “Efficiency Frontier,” a market segment defined by models that offer high performance at competitive price points. The benchmark data illustrates that Mk1 delivers performance comparable to leading models like GPT-5 and Gemini 3.1 Pro but at a cost structure typically associated with “Lite” or “Flash” versions.

With pricing at $0.15 per million input tokens and $1.50 per million output tokens, Mk1’s blended cost comes to approximately $0.30 per million tokens, a figure that corresponds to a workload of about eight input tokens for every output token. By comparison, GPT-5 has a blended cost near $2.00, and Gemini 3.1 Pro is around $3.00, making Mk1 a significantly more cost-effective option for large-scale industrial deployment.
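A blended price is a weighted average of the input and output prices, so it shifts with the mix of tokens in a given workload. The sketch below shows the arithmetic using Mk1's published list prices; the input-to-output ratios are illustrative assumptions, not figures from Perceptron, and `blended_cost` is a name introduced here for the example.

```python
def blended_cost(input_price: float, output_price: float, input_share: float) -> float:
    """Weighted-average price per million tokens.

    input_share is the fraction of all tokens that are input tokens (0 to 1).
    """
    if not 0.0 <= input_share <= 1.0:
        raise ValueError("input_share must be between 0 and 1")
    return input_share * input_price + (1.0 - input_share) * output_price


# Mk1 list prices from the article, in USD per million tokens.
MK1_INPUT = 0.15
MK1_OUTPUT = 1.50

# Assumed input:output ratios, chosen only to show how the blend moves.
for ratio in (3, 8):
    share = ratio / (ratio + 1)
    cost = blended_cost(MK1_INPUT, MK1_OUTPUT, share)
    print(f"{ratio}:1 input:output -> ${cost:.2f} per million tokens")
```

At an 8:1 mix the blend works out to $0.30, while a more output-heavy 3:1 mix comes to roughly $0.49, so the headline figure is best read as workload-dependent.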

This pricing strategy aims to make sophisticated physical AI accessible for widespread industrial applications, moving beyond the realm of academic research.

Architecture and temporal continuity

A key technical feature of Perceptron Mk1 is its ability to process native video at up to 2 frames per second within a 32K-token context window. Unlike many existing vision-language models (VLMs) that process video as a series of discrete images, Mk1 is engineered for continuous temporal understanding.
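To get a feel for what a 2 frames-per-second rate and a 32K-token window imply for clip length, the following rough sketch computes sampled frame timestamps and a frame budget. The per-frame token cost is not stated in the article, so `tokens_per_frame` and `reserved_tokens` are purely hypothetical parameters, and treating "32K" as 32,000 tokens is also an assumption.

```python
MAX_FPS = 2.0            # from the article: up to 2 frames per second
CONTEXT_TOKENS = 32_000  # "32K" taken as 32,000 here; the exact limit is an assumption


def sample_timestamps(duration_s: float, fps: float = MAX_FPS) -> list[float]:
    """Return timestamps (in seconds) of frames sampled uniformly at `fps`."""
    if duration_s < 0 or fps <= 0:
        raise ValueError("duration_s must be >= 0 and fps must be > 0")
    count = int(duration_s * fps)
    return [i / fps for i in range(count)]


def max_frames(tokens_per_frame: int, reserved_tokens: int = 0) -> int:
    """Frames that fit in the window after reserving prompt/output tokens.

    tokens_per_frame is a hypothetical cost; the real value is not published
    in the article.
    """
    if tokens_per_frame <= 0:
        raise ValueError("tokens_per_frame must be positive")
    return max(0, (CONTEXT_TOKENS - reserved_tokens) // tokens_per_frame)


if __name__ == "__main__":
    frames = sample_timestamps(10.0)
    print(len(frames), frames[:4])
    print(max_frames(tokens_per_frame=256, reserved_tokens=2_000))
```

Under these assumptions, a 10-second clip yields 20 frames, and a hypothetical 256 tokens per frame with 2,000 tokens held back would allow 117 frames, or a little under a minute of video at the full rate.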

This architectural design enables the model to maintain object identity and track continuity over extended video streams, even through occlusions—a critical capability for applications in robotics and surveillance. Developers can query specific moments within long video sequences and receive precise time codes, simplifying tasks like video clipping and event detection.
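Once a model returns the moment an event occurs, turning it into a clip is mostly bookkeeping. The sketch below assumes the event arrives as a plain offset in seconds (the actual response format is not described in the article) and pads it into a start and end time code; `to_timecode` and `clip_window` are illustrative helpers, not part of any Perceptron tooling.

```python
def to_timecode(seconds: float) -> str:
    """Format a non-negative offset in seconds as HH:MM:SS.mmm."""
    if seconds < 0:
        raise ValueError("seconds must be non-negative")
    total_ms = round(seconds * 1000)
    hours, rem = divmod(total_ms, 3_600_000)
    minutes, rem = divmod(rem, 60_000)
    secs, ms = divmod(rem, 1000)
    return f"{hours:02d}:{minutes:02d}:{secs:02d}.{ms:03d}"


def clip_window(event_s: float, video_length_s: float,
                pad_before_s: float = 2.0, pad_after_s: float = 2.0) -> tuple[str, str]:
    """Pad an event time into a clip, clamped to the bounds of the video."""
    start = max(0.0, event_s - pad_before_s)
    end = min(video_length_s, event_s + pad_after_s)
    return to_timecode(start), to_timecode(end)


if __name__ == "__main__":
    # Hypothetical event detected 3725.5 seconds into a two-hour recording.
    print(clip_window(3725.5, 7200.0))
```

The resulting pair of time codes can be handed to whatever cutting tool a pipeline already uses; clamping keeps events near the start or end of a recording from producing out-of-range clips.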

Reasoning with the laws of physics

Mk1’s “Physical Reasoning” capability is a significant differentiator, enabling it to understand object dynamics and interactions within real-world physical contexts with high spatial precision. For instance, the model can analyze a basketball game to determine the sequence of events, like differentiating whether a shot was taken before or after a buzzer by processing the ball’s trajectory and the shot clock display.

This goes beyond simple pattern matching, requiring a deep understanding of how objects behave in space and time. The model can perform precise pointing and counting in complex, dense scenes, even reading analog gauges and clocks—tasks that have historically challenged purely digital vision systems.

The model also demonstrates strong general knowledge. In testing, Mk1 accurately described footage from a 1906 New York City skyscraper construction film, identifying workers suspended by ropes and estimating the era from visual cues alone.

A developer platform for physical AI

Perceptron has also launched an expanded developer platform designed to streamline the creation of applications leveraging these advanced perception capabilities. The Perceptron SDK, accessible via Python, includes specialized functions such as “Focus” for automatic region cropping based on natural language prompts (e.g., identifying personal protective equipment), and “Counting” for precise object enumeration in dense scenes.

The platform also supports in-context learning, enabling developers to adapt Mk1 to specific tasks by supplying a small number of examples in the prompt rather than retraining the model, such as showing it how to identify and label specific types of items within a new scene.
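In-context learning generally means placing a few labeled examples ahead of the actual query in a single request, with no change to the model's weights. The sketch below illustrates that idea with a generic chat-style message list; the layout, field names, and file paths are hypothetical and do not reflect the Perceptron SDK's actual schema.

```python
from dataclasses import dataclass


@dataclass
class Example:
    image_path: str  # hypothetical path to an example image
    label: str       # the label the model should learn to apply


def build_few_shot_prompt(examples: list[Example], query_image: str, task: str) -> list[dict]:
    """Place labeled examples before the query; no model weights are updated."""
    messages: list[dict] = [{"role": "system", "content": task}]
    for ex in examples:
        messages.append({"role": "user", "content": {"image": ex.image_path}})
        messages.append({"role": "assistant", "content": ex.label})
    # The final user turn is the unlabeled image the model should classify.
    messages.append({"role": "user", "content": {"image": query_image}})
    return messages


if __name__ == "__main__":
    shots = [Example("hard_hat.jpg", "hard hat"), Example("vest.jpg", "safety vest")]
    prompt = build_few_shot_prompt(shots, "site_photo.jpg", "Label the protective equipment shown.")
    print(len(prompt))
```

Because the examples travel with each request, swapping in a new task is a matter of changing the example list rather than running a training job.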

Licensing strategies and the Isaac series

Perceptron employs a dual licensing strategy. The core Perceptron Mk1 model is proprietary and accessed via API, prioritizing enterprise-grade performance and security. Concurrently, the company offers its “Isaac” series as an open-weights alternative.

The Isaac series, starting with Isaac 0.1 in September 2025 and continuing with Isaac 0.2-2b-preview released in December 2025, features open-weight vision-language models optimized for edge and low-latency deployments. While the weights are publicly available on Hugging Face, Perceptron provides commercial licenses for enhanced control and on-premise deployment. The Isaac models are engineered for sub-200ms time-to-first-token latency, making them suitable for real-time edge applications.

Background on Perceptron founding and focus

Perceptron AI, based in Bellevue, Washington, was founded by Armen Aghajanyan and Akshat Shrivastava, both former research scientists at Meta’s Facebook AI Research (FAIR) lab. The company emerged from their work on multimodal foundation models, including Meta’s Chameleon and MoMa projects, which explored early-fusion models for integrating text and images.

Perceptron’s core mission is to advance “physical AI”—models designed to process real-world sensory streams for applications in robotics, manufacturing, security, and content moderation, extending research in multimodal AI to address the complexities of the physical environment.

Partner ecosystems and future outlook

Perceptron’s partner network is already demonstrating the practical applications of Mk1. Use cases include automated highlight clipping for live sports events, leveraging the model’s temporal understanding to identify key moments, and curating teleoperation data for robot training. Other applications involve multimodal quality control in manufacturing for real-time defect detection and context-aware wearable assistants.

Aghajanyan anticipates that these advancements will drive the widespread adoption of “physical AI,” making it as integral to operations as digital AI is today.

Business Style Takeaway: Perceptron’s Mk1 model significantly lowers the cost barrier for advanced video analysis AI, making sophisticated computer vision and temporal reasoning accessible to a broader range of businesses. This breakthrough promises to unlock new efficiencies and capabilities in sectors ranging from manufacturing and logistics to content creation and robotics, potentially redefining operational standards and competitive advantages.

Based on materials from: venturebeat.com