Anthropic has added new capabilities to its Claude Managed Agents platform aimed at improving AI agent performance and reliability. At its annual Code with Claude developer conference, the company detailed a feature called “dreaming,” which lets AI agents learn from their operational history and refine their performance over time. The feature is a step toward the autonomous, self-improving AI systems that enterprises want before deploying agents in sensitive production environments.

In addition to “dreaming,” Anthropic has advanced two previously experimental features—outcomes and multi-agent orchestration—from research preview to public beta. These enhancements are now widely accessible to developers building applications on the Claude platform. Collectively, these advancements address critical challenges in scaling AI agents, focusing on accuracy, continuous learning, and the efficient management of complex, multi-step workflows.

Early adopters have reported substantial improvements. The legal AI firm Harvey experienced approximately a sixfold increase in task completion rates after implementing the dreaming feature. Wisedocs, a company specializing in medical document review, achieved a 50% reduction in document review time by leveraging the outcomes feature. Furthermore, Netflix is now employing multi-agent orchestration to process logs from hundreds of simultaneous builds.

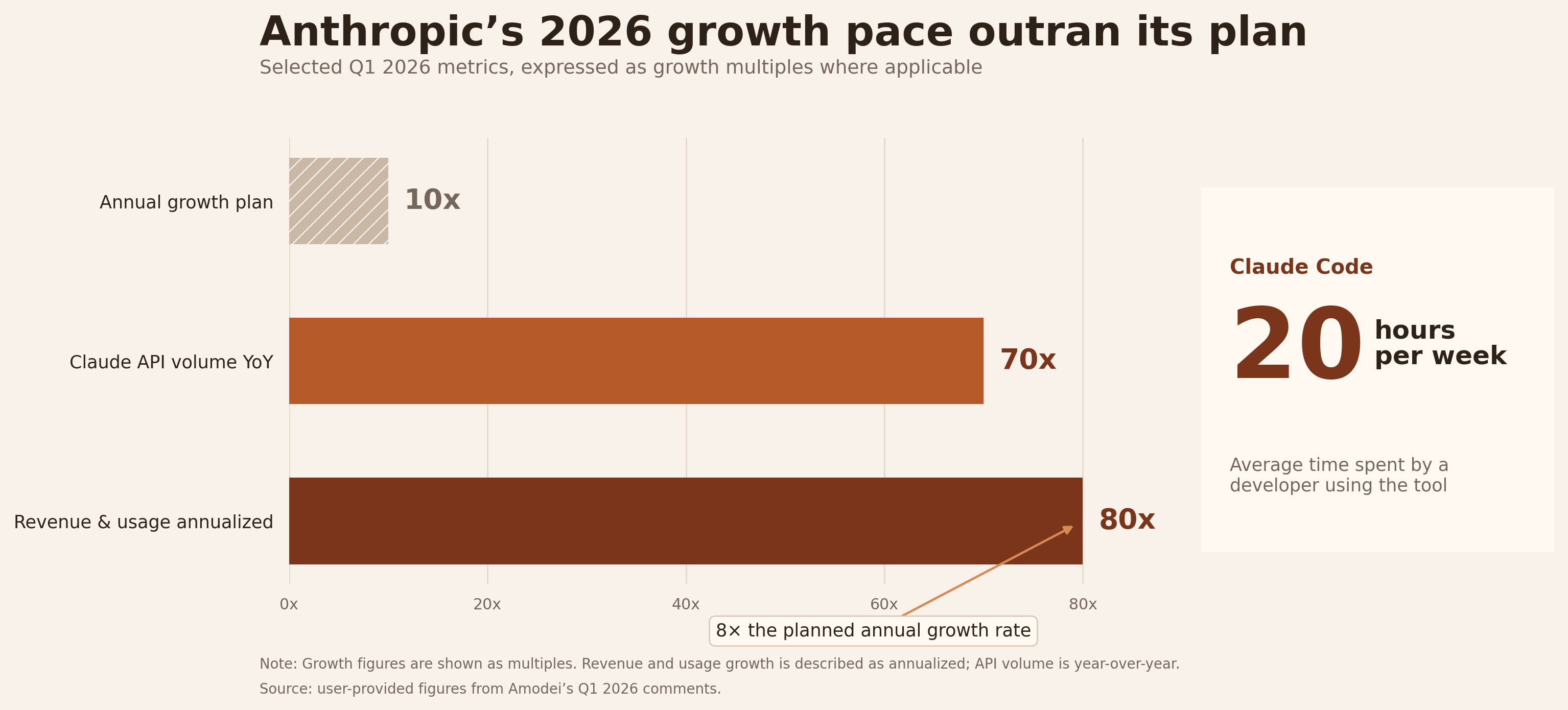

These announcements coincide with a period of remarkable growth for Anthropic. CEO Dario Amodei said during a fireside chat that the company’s expansion has surpassed even its ambitious internal forecasts. In the first quarter of 2026, Anthropic’s revenue and usage grew at an annualized rate of 80x, eight times the 10x annual growth it had planned for. API volume on the Claude platform is up nearly 70x year over year, and developers who actively use Claude Code spend an average of 20 hours per week in the tool.

“We had meticulously planned for a scenario of 10x annual growth,” Amodei stated. “However, we experienced 80x growth, which accounts for our challenges with compute resources.”

Anthropic’s “Dreaming” Feature Enhances AI Agents Through Self-Reflection

The “dreaming” capability is the most novel of the announcements: an approach to agent memory that goes beyond conventional session-based context retention. Earlier agent memory functions let Claude retain preferences and context within and across individual interactions; dreaming operates one level up. It systematically reviews an agent’s past sessions and memory stores to identify patterns and curate knowledge, so that performance improves over the long term. It surfaces insights that cannot be seen from any single session, such as recurring errors, effective workflows that multiple agents converge on independently, and preferences shared across an agent team.

Alex Albert, Anthropic’s lead for research product management, elaborated on the concept, drawing parallels to human organizational learning. “When people engage in a workflow with Claude, they often wish to distill the essential path from initiation to completion, especially after iterative refinement,” Albert explained. “Dreaming automates this process. Instead of manually constructing a ‘skill’ from personal experience, the model synthesizes this knowledge, effectively creating an internal playbook for future sessions.”

Significantly, the dreaming feature does not alter the underlying model weights. “Dreaming does not involve direct modifications to the model itself, such as weight updates,” Albert clarified. Instead, the agent externalizes its learnings as plain-text notes and structured “playbooks.” These artifacts are accessible and auditable by humans, ensuring transparency. Addressing concerns about the trust implications of AI agents consolidating their knowledge, Albert acknowledged the need for a degree of trust but emphasized that all memories are inspectable and that advanced models are increasingly adept at managing this consolidation process. “They are learning to generate more effective guidance for their future selves,” he noted.
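To make the idea concrete, here is a minimal Python sketch of cross-session consolidation. It is not Anthropic’s implementation or API: `SessionLog`, `dream`, and the `min_sessions` threshold are hypothetical names chosen for illustration. It only shows the general shape described above, where patterns that recur across sessions are written out as an inspectable plain-text playbook and no model weights are touched.

```python
from collections import Counter
from dataclasses import dataclass, field


@dataclass
class SessionLog:
    """Hypothetical record of one past agent session."""
    session_id: str
    errors: list[str] = field(default_factory=list)
    notes: list[str] = field(default_factory=list)


def dream(sessions: list[SessionLog], min_sessions: int = 2) -> str:
    """Consolidate cross-session patterns into a plain-text playbook.

    Only items seen in at least `min_sessions` distinct sessions are kept,
    because a one-off event is not yet a pattern.
    """
    error_counts: Counter = Counter()
    note_counts: Counter = Counter()
    for s in sessions:
        # set() so that one noisy session cannot inflate a count by itself
        error_counts.update(set(s.errors))
        note_counts.update(set(s.notes))

    lines = ["PLAYBOOK (plain text, human-auditable; no weight updates)"]
    lines.append("Recurring errors to avoid:")
    for err, n in sorted(error_counts.items()):
        if n >= min_sessions:
            lines.append(f"- {err} (seen in {n} sessions)")
    lines.append("Practices that worked repeatedly:")
    for note, n in sorted(note_counts.items()):
        if n >= min_sessions:
            lines.append(f"- {note} (seen in {n} sessions)")
    return "\n".join(lines)


# Illustrative data only; these are not real session logs.
sessions = [
    SessionLog("s1", errors=["descended too fast"], notes=["scan terrain first"]),
    SessionLog("s2", errors=["descended too fast", "low fuel"], notes=["scan terrain first"]),
    SessionLog("s3", errors=["low fuel"]),
]
print(dream(sessions))
```

Because the result is ordinary text, a person can read, edit, or reject it before any future session uses it, which is the auditability property Albert describes.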

Live Demonstration Showcases AI Agents Learning and Adapting Overnight Without Human Intervention

During the keynote presentation, Anthropic showcased the practical application of these features through a live demonstration involving a hypothetical aerospace startup, “Lumara,” tasked with autonomously mining lunar resources via drone landings. The setup featured a multi-agent system comprising:

- A Commander Agent: Overseeing overall mission objectives.

- A Detector Agent: Identifying optimal landing zones.

- A Navigator Agent: Managing safe drone flight and landing procedures.

A success rubric was defined, mandating soft landings, clear terrain, and sufficient fuel reserves for a return journey to Earth.

An initial simulation across six potential landing sites yielded promising but imperfect results. To enhance performance, the presenters initiated a dreaming session via the Claude Developer Console. This process allowed the dreaming agent to analyze all prior simulation data, distilling patterns into a comprehensive descent playbook. Subsequent simulations, utilizing this new playbook, demonstrated marked improvements, particularly in areas that had previously underperformed.

“Our role was simplified to merely initiating the process with a button press,” stated Angela Jiang, Head of Product for the Claude Platform, referring to herself and a colleague on stage. “The entire enhancement was driven by dreaming.”

The demonstration showed how the three features work together. Multi-agent orchestration divided the complex mission into specialized tasks. Outcomes provided a standardized evaluation framework, allowing a dedicated grader agent to assess performance against the defined rubric. Dreaming then synthesized learnings from these evaluations to refine future execution, creating a continuous improvement cycle that runs without ongoing human oversight.

Anthropic’s “Grader” Agent: A Novel Approach to Quality Assurance in AI Workflows

The outcomes feature, now available in public beta, empowers developers to define success criteria through a rubric—whether for formatting, brand voice, or other specifications. The AI agent then iteratively refines its output to meet these standards autonomously. A key architectural innovation of outcomes is its separation of concerns. Upon task completion by an agent, a distinct “grader” agent evaluates the output against the developer-defined rubric within its own isolated context window. This independent evaluation prevents biases or reasoning patterns from the working agent from influencing the assessment.

When the grader identifies discrepancies between the output and the rubric, it provides specific feedback for revision. The working agent then undertakes another iteration, repeating this loop until all rubric criteria are satisfied, thereby eliminating the need for manual human review at each stage.
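The work-grade-revise loop described above can be sketched in a few lines of Python. This is an illustration under stated assumptions, not the outcomes API: `grade`, `run_until_pass`, `toy_worker`, and the `max_rounds` cap are hypothetical, and the worker and rubric checks are plain functions standing in for model calls. The point it shows is the separation of concerns: the grader receives only the output and the rubric, never the worker’s reasoning.

```python
from typing import Callable

# A rubric maps a criterion name to a pass/fail check on the output text.
Rubric = dict[str, Callable[[str], bool]]
# A worker takes (task, previous_draft, feedback) and returns a new draft.
Worker = Callable[[str, str, list[str]], str]


def grade(output: str, rubric: Rubric) -> list[str]:
    """Grader: sees only the output and the rubric; returns failed criteria."""
    return [name for name, check in rubric.items() if not check(output)]


def run_until_pass(worker: Worker, task: str, rubric: Rubric,
                   max_rounds: int = 5) -> tuple[str, int]:
    """Loop worker -> grader until every criterion passes (or give up)."""
    draft = ""
    feedback: list[str] = []
    for round_no in range(1, max_rounds + 1):
        draft = worker(task, draft, feedback)
        feedback = grade(draft, rubric)
        if not feedback:
            return draft, round_no
    raise RuntimeError(f"rubric still failing after {max_rounds} rounds: {feedback}")


def toy_worker(task: str, draft: str, feedback: list[str]) -> str:
    """Stand-in for a model call: revises the draft using grader feedback."""
    text = draft or task
    if "starts with a capital letter" in feedback:
        text = text[0].upper() + text[1:]
    if "ends with a period" in feedback:
        text = text + "."
    return text


rubric: Rubric = {
    "starts with a capital letter": lambda s: s[:1].isupper(),
    "ends with a period": lambda s: s.endswith("."),
}

print(run_until_pass(toy_worker, "quarterly summary", rubric))
# → ('Quarterly summary.', 2)
```

The `max_rounds` cap is a design choice added for this sketch; the article does not say how the real feature bounds the number of iterations.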

Albert characterized Anthropic’s verification strategy as an investment in “additional computational time for testing, allowing multiple models to analyze a problem thoroughly to validate the work of another.” He acknowledged the inherent questions surrounding AI models assessing their own outputs, but noted that a fresh context window is demonstrably more effective at identifying errors than expecting a long-running process to self-correct. “You achieve higher success rates by presenting the output to a new instance of Claude and asking it to identify potential issues,” he explained. He further noted that attention span can degrade in extended sessions—a limitation Anthropic is actively addressing in future model iterations.

This methodology aligns with practices implemented at GitHub. Mario Rodriguez, Chief Product Officer at GitHub, discussed during a separate conference session how Copilot employs a similar advisory pattern, pairing a less complex, more cost-effective executor model with a larger, more capable mentor model. When the executor encounters challenges beyond its capacity, it seeks guidance from the mentor model before resuming its task. Rodriguez reported that this architecture delivers intelligence levels approaching those of premium models at a reduced cost. GitHub strategically integrates critique models at critical junctures in the coding workflow: post-planning, post-implementation of complex features, and pre-execution of tests.
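The executor-and-mentor pattern Rodriguez describes can be sketched as a simple escalation rule. The following Python is a hypothetical illustration, not GitHub’s or Copilot’s code: `solve_with_mentor`, `cheap_executor`, and `expensive_mentor` are invented names, and both “models” are ordinary functions. It shows only the control flow: the cheaper executor tries first, asks the mentor for guidance when stuck, then resumes the task itself.

```python
from dataclasses import dataclass
from typing import Callable, Optional

# Executor takes (task, optional hint) and returns an answer, or None if stuck.
Executor = Callable[[str, Optional[str]], Optional[str]]
# Mentor takes the task and returns guidance text.
Mentor = Callable[[str], str]


@dataclass
class Result:
    answer: str
    mentor_calls: int  # how often the costlier model was consulted


def solve_with_mentor(task: str, executor: Executor, mentor: Mentor) -> Result:
    """Try the cheap executor first; escalate to the mentor only if it is stuck."""
    answer = executor(task, None)
    if answer is not None:
        return Result(answer, mentor_calls=0)
    hint = mentor(task)            # the single expensive call
    answer = executor(task, hint)  # the executor resumes with guidance
    if answer is None:
        raise RuntimeError("executor is still stuck after mentor guidance")
    return Result(answer, mentor_calls=1)


def cheap_executor(task: str, hint: Optional[str]) -> Optional[str]:
    """Stand-in for a small model: gives up on 'hard' tasks unless guided."""
    if hint is None and "hard" in task:
        return None
    prefix = f"[guided by: {hint}] " if hint else ""
    return prefix + f"done: {task}"


def expensive_mentor(task: str) -> str:
    """Stand-in for a larger model that only gives advice."""
    return "split it into smaller steps"


print(solve_with_mentor("easy task", cheap_executor, expensive_mentor))
print(solve_with_mentor("hard task", cheap_executor, expensive_mentor))
```

Tracking `mentor_calls` makes the cost argument visible: the larger model is paid for only on the tasks where the executor actually needs help.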

Multi-Agent Orchestration Enables Complex Task Parallelization for AI Agents

Multi-agent orchestration, the third feature transitioning to public beta, allows a primary agent to segment a large task into subtasks. Each subtask is then delegated to a specialized agent, equipped with its own model, system prompt, tools, and independent context window. The Claude Console provides complete traceability for every step, detailing which agent performed the action, the sequence of operations, and the rationale behind them.

This design grants each sub-agent an isolated context, which Anthropic asserts leads to superior outcomes compared to a single agent managing all aspects of a complex task within one processing thread. “Each sub-agent operates with its own independent thread and context window,” the keynote presenters emphasized. “This deliberate separation, followed by result aggregation, yields enhanced performance.”
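A rough Python sketch of this fan-out and aggregation follows. It is an assumption-laden illustration rather than the Managed Agents API: `SubAgent`, `orchestrate`, and the agent names (borrowed from the keynote demo) are hypothetical, and each agent’s “work” is simulated. What it demonstrates is the isolation property: every sub-agent keeps its own context, runs on its own thread, and hands back only a final result.

```python
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass, field


@dataclass
class SubAgent:
    """Hypothetical sub-agent with its own prompt and private context."""
    name: str
    system_prompt: str
    context: list[str] = field(default_factory=list)  # never shared

    def run(self, subtask: str) -> str:
        # Intermediate work accumulates in this agent's own context only.
        self.context.append(f"system: {self.system_prompt}")
        self.context.append(f"task: {subtask}")
        self.context.append("scratch: tool output, dead ends, partial reasoning")
        # Only the final result is returned to the orchestrator.
        return f"{self.name}: finished '{subtask}'"


def orchestrate(assignments: list[tuple[SubAgent, str]]) -> list[str]:
    """Run each (agent, subtask) pair on its own thread, then aggregate."""
    with ThreadPoolExecutor(max_workers=max(1, len(assignments))) as pool:
        futures = [pool.submit(agent.run, subtask) for agent, subtask in assignments]
        # Collect in submission order so the aggregate is deterministic.
        return [f.result() for f in futures]


detector = SubAgent("detector", "Identify optimal landing zones.")
navigator = SubAgent("navigator", "Manage safe flight and landing.")

results = orchestrate([
    (detector, "find landing zones"),
    (navigator, "plan descent"),
])
print(results)
```

The primary agent’s thread sees two short result strings, while the scratch work stays behind in each sub-agent’s `context` list.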

Albert offered a rule of thumb for adopting multi-agent architectures: they pay off most for investigative tasks in which an agent sifts through large amounts of data, much of which is ultimately discarded. “If the objective is to ascertain a specific piece of information, the extraneous search results are irrelevant; only the answer matters,” he stated. He described using ephemeral sub-agents for targeted retrieval operations, with only the final result passed back to the primary thread. Looking ahead, Albert anticipates that models will increasingly manage this parallelization autonomously. “In the future, the distinction between single-agent and multi-agent systems will become less relevant. Users will interact with a unified Claude interface, and the system will automatically deploy the optimal architecture,” he predicted.

Anthropic’s Strategic Focus: Bridging the AI Capability-Adoption Chasm

These platform advancements are integral to Anthropic’s overarching strategy, articulated throughout the conference as a mission to close “the gap between AI capabilities and their practical application for users.” Ami Vora, Anthropic’s Chief Product Officer, underscored this theme in her opening keynote, noting that while AI model capabilities are advancing exponentially, organizational adoption often follows a linear trajectory.

Dianne Penn, leading product for Anthropic’s research team, introduced the concept of “task horizon” as a key metric for progress—referring to the duration an AI agent can operate autonomously while maintaining or improving deliverable quality. “A year ago, agents operated for mere minutes,” she observed. “Today, agents can function for hours continuously. Our future vision includes proactive, perpetually active agents that can identify and undertake tasks without losing context.”

The event also featured infrastructure updates aimed at accelerating developer adoption. Anthropic announced a doubling of its five-hour rate limits across Pro, Max, Team, and Enterprise plans, alongside substantial increases in API rate limits. A partnership with SpaceX was revealed, leveraging the full capacity of its Colossus data center to expand compute availability, directly addressing the supply constraints previously mentioned by Amodei.

All three newly announced features are part of Claude Managed Agents. Launched in public beta on April 8, the framework packages best practices for agent development, including memory management, tool integration, and action handling. Anthropic reports that teams using Managed Agents deploy ten times faster than those building custom agent infrastructure. Albert used an analogy to describe the platform’s efficiency: “Managed Agents abstract away the complexities of system setup, much like developing an application for macOS doesn’t require reimplementing the core operating system.”

The Impact of Dreaming, Outcomes, and Multi-Agent Orchestration on Enterprise AI’s Future

Competition among AI agent platforms is intensifying, with major players like OpenAI and Google vying for developer attention. Anthropic is betting that production reliability, rather than raw model intelligence, is what will win enterprise investment. The dreaming feature in particular takes a new approach: agents systematically analyze their own operational history and turn what they find into reusable knowledge, moving closer to the self-improving systems that high-stakes enterprise applications call for.

The conference highlighted existing deployments at scale. Mercado Libre, Latin America’s largest e-commerce platform, utilizes Claude Code extensively, with 23,000 engineers employing the tool and reviewing over 500,000 pull requests. Their objective is to achieve 90% autonomous coding by the third quarter of this year. Shopify has integrated Claude Code across its engineering, design, product, and data science departments.

Dario Amodei presented an ambitious long-term vision, extrapolating from single agents to multi-agent systems and ultimately to “organizational intelligence”—transforming from “a team of smart people in a room” to “a nation of geniuses within the data center.” He reiterated a prior prediction that 2026 would witness the emergence of the first billion-dollar company founded and led by a single individual. “This has not yet occurred,” he commented, “but we have seven months remaining.”

Dreaming is presently available as a research preview. Outcomes and multi-agent orchestration are in public beta, accessible to all developers on the Claude platform. Whether the remaining seven months will suffice for a solo founder to establish a billion-dollar enterprise remains uncertain, but Anthropic’s latest toolset provides a compelling new set of capabilities for ambitious entrepreneurs.

Business Style Takeaway: Anthropic’s advancements in AI agent development, particularly the “dreaming” feature for continuous self-improvement and robust orchestration capabilities, signal a critical shift towards enterprise-grade reliability. This focus on making AI agents more autonomous, accurate, and scalable addresses key adoption barriers, positioning Anthropic as a strong contender for businesses seeking to integrate AI into mission-critical operations and unlock new levels of productivity.

According to the portal: venturebeat.com